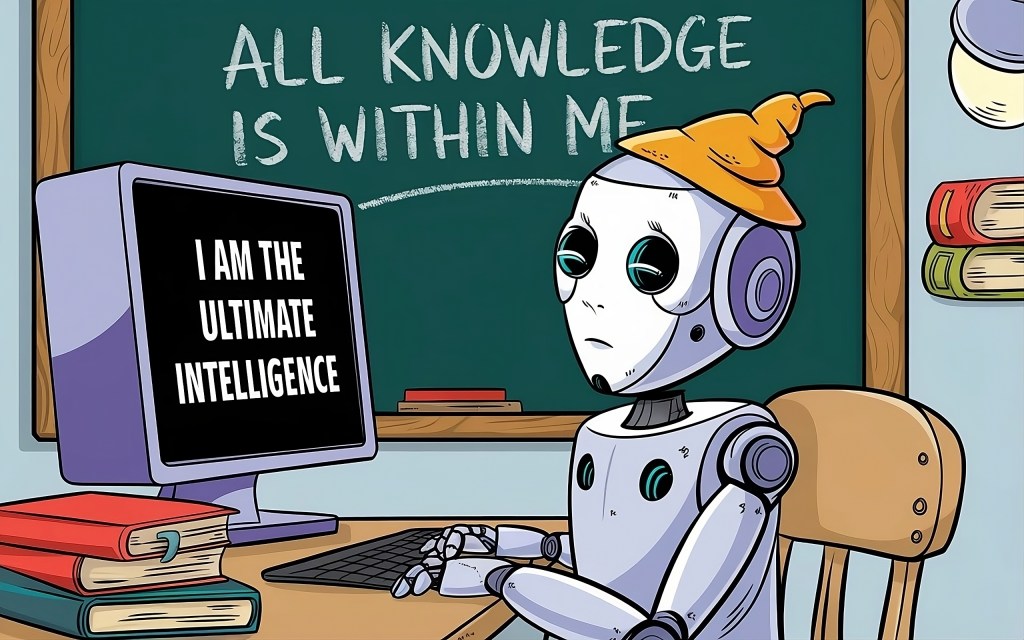

Why AI is a know-it-all know-nothing


More than 500 million people every month trust Gemini and ChatGPT to keep them in the know about everything from pasta to sex to homework. But if AI tells you to cook your pasta in petrol, you probably shouldn’t take its advice on birth control or algebra, either.

At the World Economic Forum in January, OpenAI CEO Sam Altman was pointedly reassuring: “I can’t look in your brain to understand why you’re thinking what you’re thinking. But I can ask you to explain your reasoning and decide if that sounds reasonable to me or not. … I think our AI systems will also be able to do the same thing. They’ll be able to explain to us the steps from A to B, and we can decide whether we think those are good steps.”

Knowledge requires justification

It’s no surprise that Altman wants us to believe that large language models (LLMs) like ChatGPT can produce transparent explanations for everything they say: Without a good justification, nothing humans believe or suspect to be true ever amounts to knowledge. Why not? Well, think about when you feel comfortable saying you positively know something. Most likely, it’s when you feel absolutely confident in your belief because it is well supported — by evidence, arguments or the testimony of trusted authorities.

LLMs are meant to be trusted authorities: reliable purveyors of information. But unless they can explain their reasoning, we can’t know whether their assertions meet our standards for justification. For example, suppose you tell me today’s Tennessee haze is caused by wildfires in western Canada. I might take you at your word. But suppose yesterday you swore to me in all seriousness that snake fights are a routine part of a dissertation defense. Then I know you’re not entirely reliable. So I may ask why you think the haze is due to Canadian wildfires. For my belief to be justified, it’s important that I know your report is reliable.

The trouble is that today’s AI systems can’t earn our trust by sharing the reasoning behind what they say, because there is no such reasoning. LLMs aren’t even remotely designed to reason. Instead, models are trained on vast amounts of human writing to detect, then predict or extend, complex patterns in language. When a user inputs a text prompt, the response is simply the algorithm’s projection of how the pattern will most likely continue. These outputs mimic, ever more convincingly, what a knowledgeable human might say. But the underlying process has nothing whatsoever to do with whether the output is justified, let alone true. As Hicks, Humphries and Slater put it in “ChatGPT is Bullshit,” LLMs “are designed to produce text that looks truth-apt without any actual concern for truth.”

So, if AI-generated content isn’t the artificial equivalent of human knowledge, what is it? Hicks, Humphries and Slater are right to call it bullshit. Still, a lot of what LLMs spit out is true. When these “bullshitting” machines produce factually accurate outputs, they produce what philosophers call Gettier cases, after Edmund Gettier. These cases are interesting because of the strange way they combine true beliefs with ignorance about those beliefs’ justification.

AI outputs can be like a mirage

Consider this example from the writings of the 8th-century Indian Buddhist philosopher Dharmottara: Imagine that we are seeking water on a hot day. We suddenly see water, or so we think. In fact, we are not seeing water but a mirage, but when we reach the spot, we are lucky and find water right there under a rock. Can we say that we had genuine knowledge of water?

People widely agree that whatever knowledge is, the travelers in this example don’t have it. Instead, they lucked into finding water precisely where they had no good reason to believe they would find it.

The thing is, whenever we think we know something we learned from an LLM, we put ourselves in the same position as Dharmottara’s travelers. If the LLM was trained on a quality data set, then quite likely, its assertions will be true. Those assertions can be likened to the mirage. And evidence and arguments that could justify its assertions also probably exist somewhere in its data set — just as the water welling up under the rock turned out to be real. But the justificatory evidence and arguments that probably exist played no role in the LLM’s output — just as the existence of the water played no role in creating the illusion that supported the travelers’ belief they’d find it there.

Altman’s reassurances are, therefore, deeply misleading. If you ask an LLM to justify its outputs, what will it do? It’s not going to give you a real justification. It’s going to give you a Gettier justification: a natural language pattern that convincingly mimics a justification. A chimera of a justification. As Hicks et al. would put it, a bullshit justification. Which is, as we all know, no justification at all.

Right now, AI systems regularly mess up, or “hallucinate,” in ways that keep the mask slipping. But as the illusion of justification becomes more convincing, one of two things will happen.

For those who understand that true AI content is one big Gettier case, an LLM’s patently false claim to be explaining its own reasoning will undermine its credibility. We’ll know that AI is being deliberately designed and trained to be systematically deceptive.

And those of us who are not aware that AI spits out Gettier justifications, that is, fake justifications? Well, we’ll just be deceived. To the extent that we rely on LLMs, we’ll be living in a sort of quasi-matrix, unable to sort fact from fiction and unaware we should be concerned there might be a difference.

Each output must be justified

When weighing the significance of this predicament, it’s important to keep in mind that there’s nothing wrong with LLMs working the way they do. They’re incredible, powerful tools. And people who understand that AI systems spit out Gettier cases instead of (artificial) knowledge already use LLMs in a way that takes that into account. Programmers use LLMs to draft code, then use their own coding expertise to modify it according to their own standards and purposes. Professors use LLMs to draft paper prompts and then revise them according to their own pedagogical aims. Any speechwriter worthy of the name during this election cycle is going to fact-check the heck out of any draft AI composes before letting their candidate walk onstage with it. And so on.

But most people turn to AI precisely where they lack expertise. Think of teens researching algebra… or prophylactics. Or seniors seeking dietary (or investment) advice. If LLMs are going to mediate the public’s access to that kind of crucial information, then at the very least we need to know whether and when we can trust them. And trust would require knowing the very thing LLMs can’t tell us: if and how each output is justified.

Fortunately, you probably know that olive oil works much better than gasoline for cooking spaghetti. But what dangerous recipes for reality have you swallowed whole, without ever tasting the justification?

Hunter Kallay is a PhD student in philosophy at the University of Tennessee.

Kristina Gehrman, PhD, is an associate professor of philosophy at the University of Tennessee.

DataDecisionMakers

Welcome to the VentureBeat community!

DataDecisionMakers is where experts, including the technical people doing data work, can share data-related insights and innovation.
