Researchers at Heriot-Watt University and Alana AI Propose FurChat: A New Embodied Conversational Agent Based on Large Language Models

Large Language Models (LLMs) have taken center stage in a world where technology is advancing by leaps and bounds. These LLMs are sophisticated computer programs that can understand, generate, and interact with human language in a remarkably natural way. In recent research, an innovative embodied conversational agent known as FurChat has been unveiled. LLMs like GPT-3.5 have pushed the boundaries of what is possible in natural language processing. They can understand context, answer questions, and even generate text that reads as if it were written by a person. This capability has opened up opportunities in many domains, including robotics.

Researchers at Heriot-Watt University and Alana AI propose FurChat, a system that can function as a receptionist, engage in dynamic conversations, and convey emotions through facial expressions. FurChat's deployment at the National Robotarium illustrates its potential: it holds natural conversations with visitors and offers information on facilities, news, research, and upcoming events.

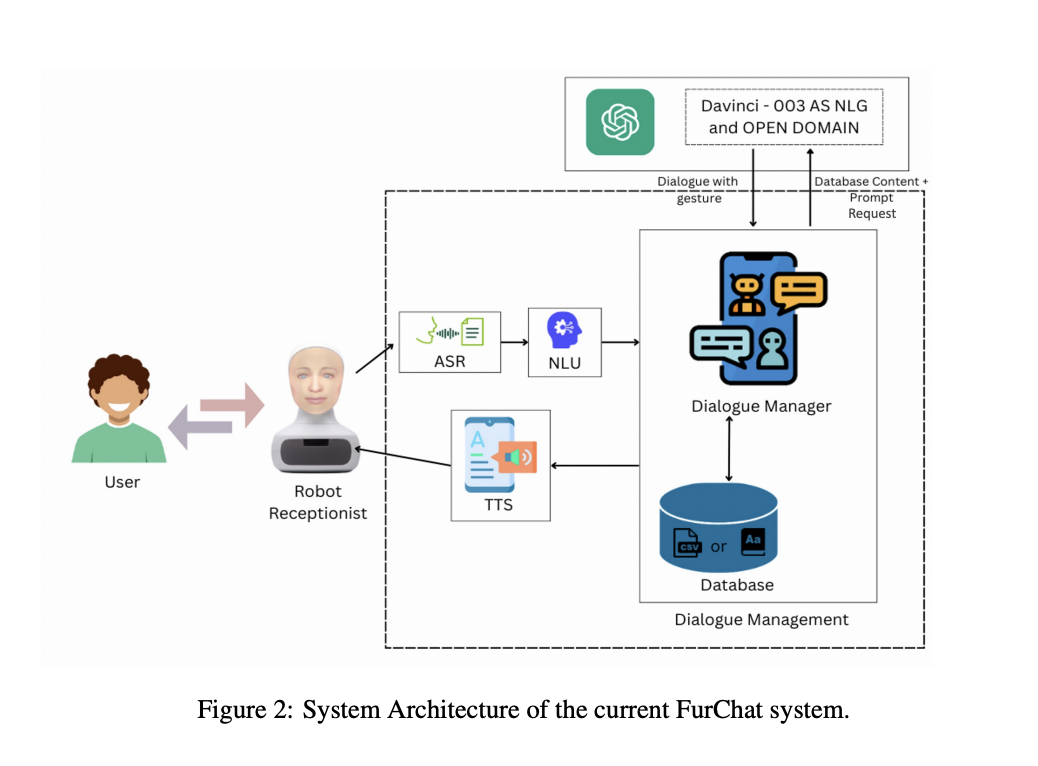

The Furhat robot, a humanoid robotic bust, has a three-dimensional mask that closely resembles a human face and uses a micro projector to project animated facial expressions onto this mask. The robot is mounted on a motorized platform that allows its head to move and nod, making its interactions more lifelike. To facilitate communication, Furhat is equipped with a microphone array and speakers, enabling it to recognize and respond to human speech.

The system is built from several cooperating components. Dialogue management involves three main parts: natural language understanding (NLU), a dialogue manager (DM), and a custom database. The NLU analyzes incoming text, classifies intents, and assesses confidence. The DM maintains conversational flow, sends prompts to the LLM, and processes its responses. The custom database is created by web-scraping the National Robotarium's website and provides data relevant to user intents. Prompt engineering, which combines few-shot learning and prompt-learning techniques, is used to elicit natural, context-aware replies from the LLM. Gesture parsing uses the Furhat SDK's facial gestures and the LLM's sentiment recognition from text to synchronize facial expressions with speech, creating a more immersive interaction. Text-to-speech conversion uses Amazon Polly, which is available in FurhatOS.
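To make the flow described above more concrete, here is a minimal Python sketch of how such a pipeline might be wired together. It is not the researchers' implementation: the keyword-based intent classifier, the placeholder database entries, the gesture labels, the bracketed gesture-tag convention, and every function name (`classify_intent`, `build_prompt`, `parse_reply`, `respond`, `fake_llm`) are assumptions made for illustration, and the LLM call is replaced by a stub.

```python
import re
from dataclasses import dataclass
from typing import Callable, Tuple

# Placeholder knowledge base keyed by intent (assumption: the real one is
# built by scraping a website; these strings are not real data).
DATABASE = {
    "facilities": "<scraped facts about facilities>",
    "events": "<scraped facts about upcoming events>",
}
# Toy keyword lists standing in for a trained intent classifier.
KEYWORDS = {
    "facilities": {"facilities", "lab", "labs"},
    "events": {"event", "events", "upcoming"},
}
# Hypothetical gesture labels; a real robot SDK defines its own set.
ALLOWED_GESTURES = {"smile", "surprise", "neutral"}

# One few-shot example showing the assumed "[gesture] speech" reply format.
FEW_SHOT = "User: Hello!\nRobot: [smile] Hi there, welcome!\n"


@dataclass
class NLUResult:
    intent: str
    confidence: float


def classify_intent(text: str) -> NLUResult:
    """Score each intent by keyword overlap; 'fallback' if nothing matches."""
    tokens = set(re.findall(r"[a-z]+", text.lower()))
    best = NLUResult("fallback", 0.0)
    for intent, words in KEYWORDS.items():
        score = len(tokens & words) / len(words)
        if score > best.confidence:
            best = NLUResult(intent, score)
    return best


def build_prompt(user_text: str, nlu: NLUResult) -> str:
    """Combine instructions, retrieved facts, and the few-shot example."""
    facts = DATABASE.get(nlu.intent, "")
    return (
        "You are a receptionist robot. Start each reply with one gesture "
        "tag in square brackets.\n"
        f"Facts: {facts}\n"
        f"{FEW_SHOT}"
        f"User: {user_text}\nRobot:"
    )


def parse_reply(reply: str) -> Tuple[str, str]:
    """Split '[gesture] speech' into (gesture, speech)."""
    match = re.match(r"\s*\[(\w+)\]\s*(.*)", reply, flags=re.DOTALL)
    if not match:
        return "neutral", reply.strip()
    tag = match.group(1).lower()
    # Unknown tags are dropped and replaced by a neutral expression.
    gesture = tag if tag in ALLOWED_GESTURES else "neutral"
    return gesture, match.group(2).strip()


def respond(user_text: str, llm: Callable[[str], str]) -> Tuple[str, str]:
    """Run NLU, build the prompt, call the LLM, and parse its reply."""
    nlu = classify_intent(user_text)
    return parse_reply(llm(build_prompt(user_text, nlu)))


def fake_llm(prompt: str) -> str:
    """Stub standing in for a real LLM call."""
    return "[smile] Happy to help with that!"
```

Calling `respond("Tell me about the labs", fake_llm)` returns a gesture label and the text to be spoken. In a real deployment the gesture would be passed to the robot's animation layer and the text to the speech synthesizer. Asking the model to prefix its reply with a tag is only one possible way to keep expressions and speech in sync.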

Looking ahead, the researchers plan to expand FurChat's capabilities. They aim to enable multiuser interactions, an area of active research in the field of receptionist robots. To tackle hallucinations in language models, they also plan to explore strategies such as fine-tuning the language model and experimenting with direct conversation generation, which would reduce reliance on NLU components. A significant milestone for the researchers is the demonstration of FurChat at the SIGDIAL conference, which will showcase the system's capabilities to a broader audience of peers and experts.

Check out the Paper. All credit for this research goes to the researchers on this project.

Astha Kumari is a consulting intern at MarktechPost. She is currently pursuing a dual degree in the Department of Chemical Engineering at the Indian Institute of Technology (IIT), Kharagpur. She is a machine learning and artificial intelligence enthusiast and is keen on exploring their real-life applications in various fields.